Workflow:Web Archiving Quality Assurance (QA) Workflow

Revision as of 11:30, 8 February 2024

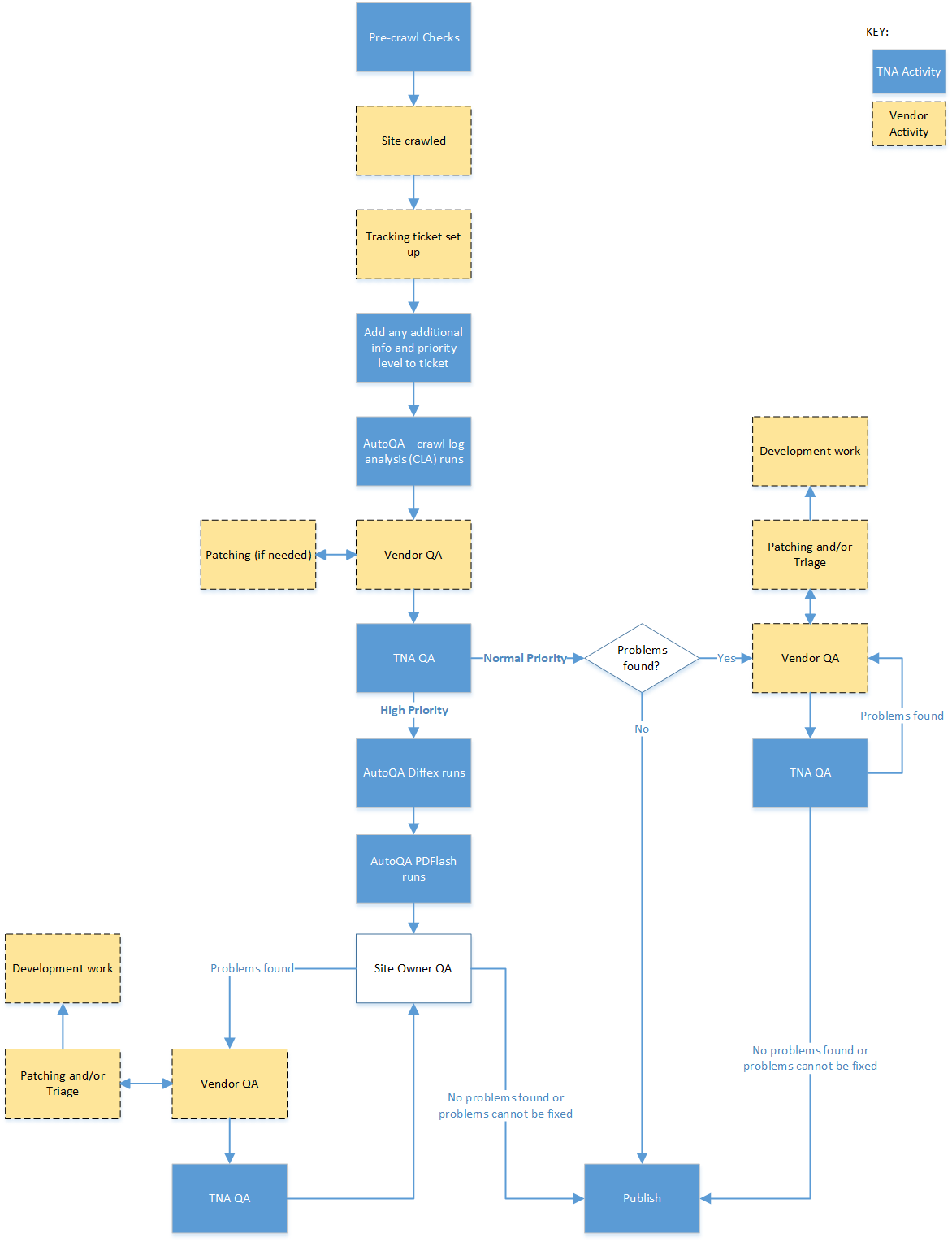

Workflow Description

Pre-crawl checks

A report is produced from WAMDB, our management database, listing all the websites due to be crawled the following month along with useful information held in the database about each site. The list is divided among the members of the web archiving team, who run through a set of checks on each website. The aim is to ensure the information we send to the crawler is as complete as possible, so the crawl is ‘right first time’. This helps to avoid additional work and the temporal inconsistencies which can occur if content is added to the archive later (patching).

Checks include:

- Is the entry URL (seed) for the site correct?

- Is the site still being updated or is it ‘dormant’? If it has not been updated since the previous crawl, we postpone the crawl.

- Is there an XML sitemap? Has it changed since the last crawl?

- Update pagination patterns. Web crawlers are set to capture sites to a specific number of ‘hops’ from the homepage. If a section has more pages than the number of ‘hops’, we send the links to those pages as additional seeds for the crawler.

- Check for new pagination patterns.

- Check for content hosted at third-party locations (e.g. downloads, images).

- Has the site been redesigned since the previous crawl?

- Are there any specific checks we need to do or information we need to provide at crawl time?

We can also add specific checks for a temporary period. For example, if we need to update the contents of a particular field due to a process change.
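The pagination check above can be sketched in code. This is a minimal illustration, not the team's actual tooling: the function name, the `{page}` placeholder convention and the example URL are all hypothetical, and it assumes the simplest case in which each paginated page links only to the next one.

```python
def pagination_seeds(pattern: str, last_page: int, max_hops: int,
                     hops_to_first_page: int = 1, first_page: int = 1) -> list[str]:
    """Return URLs of paginated pages that lie beyond the crawler's hop limit.

    Assumption: page n sits hops_to_first_page + (n - first_page) hops from
    the homepage, i.e. each page links only to the next one.
    """
    if "{page}" not in pattern:
        raise ValueError("pattern must contain a {page} placeholder")
    # Hops left over once the crawler has reached the first page of the section.
    remaining = max(max_hops - hops_to_first_page, -1)
    first_unreachable = first_page + remaining + 1
    return [pattern.replace("{page}", str(n))
            for n in range(first_unreachable, last_page + 1)]


# Hypothetical section with 8 pages, crawled to 5 hops from the homepage.
extra_seeds = pagination_seeds("https://example.gov.uk/news?page={page}",
                               last_page=8, max_hops=5)
```

Real sites often link to several pages at once (for example "1 2 3 ... 8"), so the hop distance of each page would need to be checked by hand before relying on a calculation like this.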

Site Crawled

The crawl order is generated as an XML file and sent to our vendor. The vendor launches the crawls.

Tracking and Prioritisation

We currently use JIRA as our tracking system for crawls. As soon as a crawl is launched, our vendor sets up a JIRA ticket containing basic information about the crawl. All correspondence between TNA and our vendor about the crawl takes place on the JIRA ticket. TNA marks up the JIRA tickets of any crawls which need to be treated as ‘High Priority’ by adding a standard label and a descriptive comment.

Common reasons for a site being considered High Priority include:

- Site content is particularly important to the program (e.g. the main central government website: GOV.UK)

- Site is closing or will be redeveloped soon.

- Site is new and we have not crawled it before.

- Site has been redesigned since the previous crawl.

- Some functionality did not work in the previous crawl which should now be fixed following a change to the crawl configuration – this needs to be confirmed during quality assurance checks.

Our supplier will also leave a comment in the ticket if a problem is noticed during the crawl – for example if it is becoming much larger than expected or if the crawler is blocked.

Each site has a parent ticket (task) in JIRA and each individual crawl has a child ticket (sub-task). This enables us to record information which applies to all crawls at parent level and to easily move between individual crawl tickets.

Auto-QA – Crawl Log Analysis (CLA)

After the crawl is complete, the first stage of our ‘Auto-QA’ runs. It takes every URL recorded with an error code in the crawl.log file and checks whether that URL is available on the live web. A list of any available URLs is attached to the JIRA ticket. Auto-QA is available to all under the MIT Licence: https://github.com/tna-webarchive/open-auto-qa
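The idea behind Crawl Log Analysis can be illustrated with a short sketch. It is not taken from the open-auto-qa repository. It assumes a whitespace-separated log in which the second field is the fetch status and the fourth is the URL (the layout used by Heritrix-style crawl logs), and it treats any status of 400 or above, or of zero or below, as an error. The function names are hypothetical.

```python
import http.client
import urllib.request
from typing import Callable, Iterable


def failed_urls(log_lines: Iterable[str]) -> list[str]:
    """Collect unique http(s) URLs whose logged status looks like an error."""
    seen: set[str] = set()
    failed: list[str] = []
    for line in log_lines:
        fields = line.split()
        if len(fields) < 4:
            continue  # not a well-formed log line
        try:
            status = int(fields[1])
        except ValueError:
            continue
        url = fields[3]
        is_error = status <= 0 or status >= 400
        if is_error and url.startswith(("http://", "https://")) and url not in seen:
            seen.add(url)
            failed.append(url)
    return failed


def is_live(url: str, timeout: float = 10.0) -> bool:
    """Return True if a HEAD request to the live web ends in a 2xx response."""
    request = urllib.request.Request(url, method="HEAD")
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return 200 <= response.status < 300
    except (OSError, ValueError, http.client.HTTPException):
        return False


def patch_candidates(log_lines: Iterable[str],
                     check: Callable[[str], bool] = is_live) -> list[str]:
    """URLs that failed during the crawl but are available on the live web."""
    return [url for url in failed_urls(log_lines) if check(url)]
```

Passing the live check in as a parameter keeps the log parsing testable without network access. Some servers reject HEAD requests, so a fuller version might fall back to GET.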

Vendor QA

Our vendor runs QA checks on all sites, starting with those marked as ‘High Priority’ in JIRA. They patch in URLs identified during the ‘Auto-QA’ process and other missing files, often to fix small problems such as missing images or broken formatting. All QA notes are added to the JIRA ticket, including information about problems which could not be fixed at the QA stage but may be fixed by additional work from the vendor's engineering team.

The National Archives Team QA – High Priority Crawls

We run two more parts of the ‘Auto-QA’ process. They are only run on high priority crawls as they take a lot of time and resource.

- Diffex – involves running the ‘SEO Site Audit Tool’, Screaming Frog, across the live site, then checking the outcome against the archived site. A list of URLs which were found by Screaming Frog but not in the archived site is attached to the JIRA ticket. TNA QA checks over the list and asks the vendor to patch them in. Diffex is more effective if the live site is dormant for the duration of the archiving process.

- PDF Flash – crawls all PDF files in the archived site, extracts any in-scope hyperlinks and checks them against the archived site. A list of URLs missing from the archived site is attached to the JIRA ticket. TNA QA checks over the list and asks the vendor to patch them in.
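Both checks come down to comparing one list of URLs with the contents of the archived site. The sketch below shows that comparison only. It is an illustration under stated assumptions, not the Auto-QA implementation: the function names and the normalisation rules are hypothetical, and the input lists are assumed to have been exported already (for example from a Screaming Frog crawl, or from links extracted from PDFs).

```python
from typing import Iterable, Optional
from urllib.parse import urlsplit, urlunsplit


def normalise(url: str) -> str:
    """Lower-case scheme and host, drop the fragment and any trailing slash."""
    parts = urlsplit(url.strip())
    path = parts.path.rstrip("/") or "/"
    return urlunsplit((parts.scheme.lower(), parts.netloc.lower(),
                       path, parts.query, ""))


def missing_from_archive(found_urls: Iterable[str],
                         archived_urls: Iterable[str],
                         in_scope_hosts: Optional[set[str]] = None) -> list[str]:
    """Return found URLs that have no counterpart in the archived site.

    If in_scope_hosts is given, URLs on any other host are ignored, which
    mirrors the 'in-scope hyperlinks' filter described for PDF Flash.
    """
    archived = {normalise(u) for u in archived_urls}
    missing: set[str] = set()
    for url in found_urls:
        key = normalise(url)
        if in_scope_hosts is not None and urlsplit(key).hostname not in in_scope_hosts:
            continue
        if key not in archived:
            missing.add(key)
    return sorted(missing)
```

How aggressively to normalise is a judgement call. Treating `/about` and `/about/` as the same page reduces false positives, but would hide a genuine gap on a site that serves different content at each.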

We also undertake visual QA. This involves visually comparing the live site with the archived site, concentrating on areas which are likely to cause problems, such as interactive content, videos and animations, and large publication storage sections. We use information from previous crawls to guide what we check.

If any patching is needed, including from Diffex or PDF Flash, or we find other problems with the archived site, we assign the ticket back to the vendor and ask them to fix the problems. We add detailed descriptions of the problems to JIRA, often illustrated with screenshots or short videos.

The National Archives Team QA – Regular Priority

We undertake visual QA checks only. As with High Priority crawls, this involves visually comparing the live site with the archived site, concentrating on areas which are likely to cause problems, such as interactive content, videos and animations, and large publication storage sections. We also use information from previous crawls to guide what we check and how much checking to do. If no serious problems were found in previous crawls of a site, and no problems with the current crawl were reported from Vendor QA, we do not dedicate much QA time.

Supplier Patching & Additional Work

The supplier will do the patching we request. When the work is complete, the ticket will be assigned back to us to check. If we do not think the work is complete, we will assign it back to the supplier to recheck. This will continue until we are happy that the site is as close to ‘state of the art’ as possible. If any problems cannot be resolved by patching, the supplier may suggest that we consider additional work by their engineering team. This is dealt with through a separate workflow.

Site Owner QA for very High Priority Crawls

If we are in contact with the website owner of a site which is closing or being redesigned, we will ask them to check the site before it is published. We believe this is useful as they have the best knowledge of how their site works. We emphasise that it is the site owner’s responsibility to check that content has been captured and works in the archive before removing it from the live web. If site owners find content which is not working, we will ask the Vendor to investigate and fix if possible. We provide guidance on QA for website owners on our web pages: https://www.nationalarchives.gov.uk/webarchive/archive-a-website/how-to-check-your-archived-website/

Publication

When we are happy that a site is as close as possible to ‘state of the art’ we approve it for publication. This is the end of the QA process. Any information from the QA process which may be helpful for future crawls will be added either to the JIRA parent ticket (for example for content which cannot currently be made to work in the archive) or to WAMDB (if it needs to be added to the crawl order next time).

Purpose, Context and Content

The National Archives is responsible for archiving UK central government owned websites. The archived websites are made available to the public through the UK Government Web Archive: https://www.nationalarchives.gov.uk/webarchive/

The QA workflow aims to enable us to work with our vendor (and sometimes our stakeholders) to achieve 'state of the art' capture and replay of websites, while deploying our resources efficiently.

We define 'state of the art' as the archived website looking and behaving as closely as possible to the live website at the time it was captured, taking into account the limitations of currently available technology and resources.

Evaluation/Review

The QA workflow is effective but is constantly under review. It is one of our central workflows and we seek to continuously improve it.

Further Information

ClaireUKGWA (talk) 15:22, 7 February 2024 (UTC)