Workflow:Cloud-based preservation and access workflow for MXF and MPG video

Workflow Description

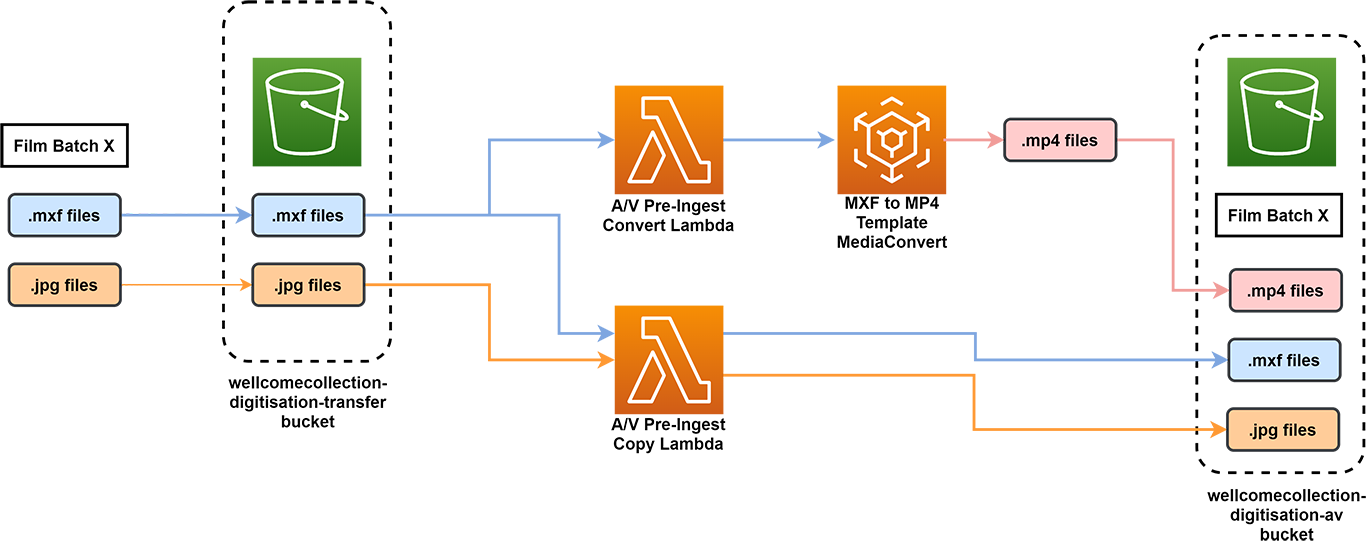

MXF Video Pre-Ingest Workflow

The video pre-ingest workflow converts the .mxf video to .mp4 (used for QA and access) and copies all files from a public bucket to a private bucket.

- The vendor uploads Film Batch X, consisting of .mxf files and .jpg poster images, to the wellcomecollection-digitisation-transfer bucket in AWS S3

- The files arriving in the bucket activate two Lambdas simultaneously

- The A/V Pre-Ingest Copy Lambda copies the .mxf and .jpg files over to a different bucket, the wellcomecollection-av-digitisation bucket, which can only be accessed by Wellcome Staff

- The A/V Pre-Ingest Convert Lambda sends the .mxf to AWS MediaConvert to create an .mp4

- The .mp4 is delivered to the wellcomecollection-av-digitisation bucket alongside the .mxf and .jpgs. The .mp4 will be QA’d and the files remain here until ingest.
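The two Lambdas described above could be sketched roughly as follows. This is a minimal illustration, not the code Wellcome runs: the function names, the injected `s3_client` argument, and the shape of the conversion request are all assumptions made for the example. Only the bucket name and the file extensions come from the description above. The actual MediaConvert job settings are not covered here.

```python
from urllib.parse import unquote_plus

# Destination bucket named in the workflow description.
STAFF_BUCKET = "wellcomecollection-av-digitisation"


def uploaded_objects(event):
    """Yield (bucket, key) for each object in an S3 event notification."""
    for record in event.get("Records", []):
        s3 = record["s3"]
        # Keys in S3 event notifications are URL-encoded (spaces arrive as '+').
        yield s3["bucket"]["name"], unquote_plus(s3["object"]["key"])


def copy_handler(event, s3_client):
    """Copy every uploaded .mxf and .jpg into the staff-only bucket.

    `s3_client` is assumed to behave like a boto3 S3 client; it is passed
    in (a hypothetical design choice) so the logic can be tested offline.
    """
    copied = []
    for bucket, key in uploaded_objects(event):
        if key.lower().endswith((".mxf", ".jpg")):
            s3_client.copy_object(
                Bucket=STAFF_BUCKET,
                Key=key,
                CopySource={"Bucket": bucket, "Key": key},
            )
            copied.append(key)
    return copied


def conversion_requests(event):
    """Describe the .mp4 conversion to request for each uploaded .mxf.

    The returned dictionaries are a hypothetical intermediate form; a real
    Lambda would translate them into a MediaConvert job.
    """
    requests = []
    for bucket, key in uploaded_objects(event):
        if key.lower().endswith(".mxf"):
            stem = key[: -len(".mxf")]
            requests.append({
                "input": f"s3://{bucket}/{key}",
                "output": f"s3://{STAFF_BUCKET}/{stem}.mp4",
            })
    return requests
```

A single `copy_object` call only handles objects up to 5 GB. Preservation-quality .mxf files are often larger, so production code would likely need a multipart copy instead.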

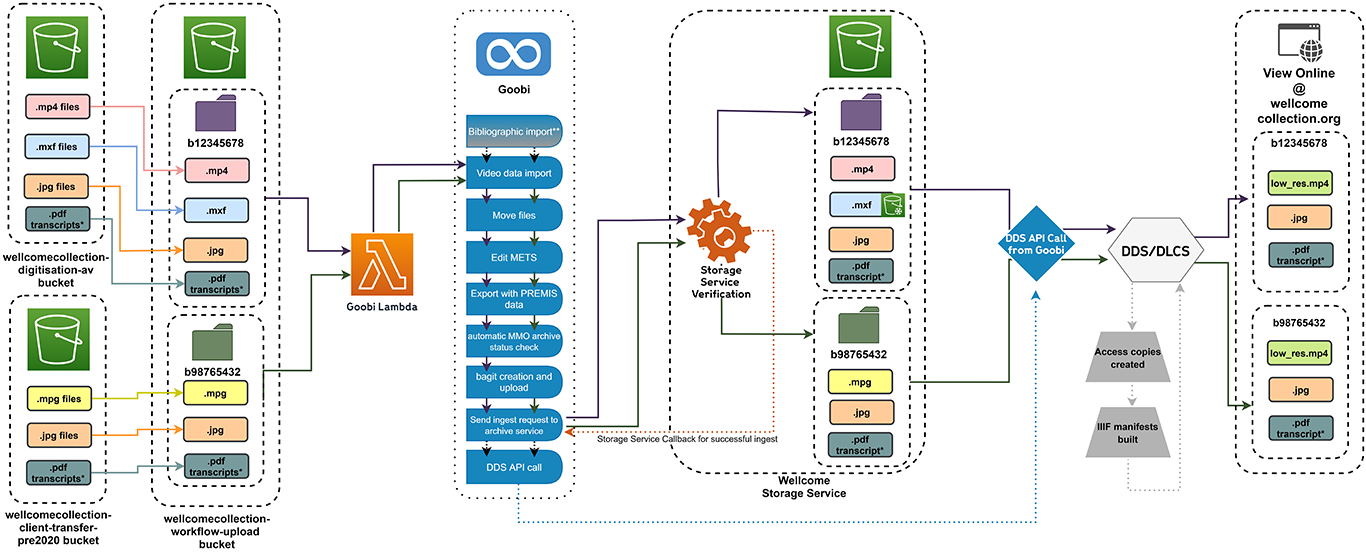

MXF/MP4 or MPG Video Ingest Workflow

This video ingest workflow can be used for either an .mxf/.mp4 pair with a .jpg poster image or an .mpg with a .jpg poster image. An optional .pdf transcript can be added to either type of ingest. Both ingest types are sent to the Wellcome Storage Service for preservation and then to DDS/DLCS to be made available for access.

- Copy an .mxf and .mp4, or an .mpg, together with the accompanying .jpg poster image and .pdf transcript (optional, as it is not always available) from their original bucket into a folder created in the wellcomecollection-workflow-upload bucket. The folder name should match the process title for the item in Goobi. The process title will have been created in Goobi prior to ingest by loading the marc.xml in the bibliographic import step.

- The upload of the files triggers the Goobi Lambda, which queries Goobi for a process title that matches the name of the folder and sends the files to that process if a match is found

- In Goobi, the user can check that the files are copied over and release the video data import step

- Goobi automatically moves the files into appropriate internal folders (access, preservation, poster, transcript) in preparation for writing the METS filegroups and usage attributes

- At Edit METS, the user must select the license and access status for the film to be written to the METS

- Goobi continues the workflow automatically:

  - writing PREMIS data to the METS

  - checking if the item is a single item or multiple manifestation

  - creating a bag

- The bag is sent to the Wellcome Storage Service, where it is verified and stored. The .mxf files are automatically lifecycled to Glacier Deep Store. The storage service sends a callback to Goobi to confirm that the bag has been stored successfully

- When Goobi receives the callback, it calls the DDS API. DDS (access software) then reads the METS in storage and starts writing a IIIF manifest, while DLCS (access file creator) begins making an access copy of the high-resolution .mp4 or .mpg

- When the IIIF manifest and access copies are ready, wellcomecollection.org/collection starts displaying the video
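The folder-name matching performed by the Goobi Lambda in the steps above can be illustrated with a short sketch. It assumes the process titles have already been fetched from Goobi and are available as a set of strings. The real Lambda queries Goobi directly, and that call is not shown here. The function names and the example identifiers are hypothetical.

```python
from collections import defaultdict
from pathlib import PurePosixPath


def group_by_folder(keys):
    """Group uploaded object keys by their top-level folder name."""
    folders = defaultdict(list)
    for key in keys:
        parts = PurePosixPath(key).parts
        if len(parts) >= 2:  # ignore objects uploaded outside a folder
            folders[parts[0]].append(key)
    return dict(folders)


def match_uploads(keys, process_titles):
    """Split uploads into folders that match a Goobi process title and the rest.

    `process_titles` stands in for the result of querying Goobi (an
    assumption for this sketch). Returns (matched, unmatched), each a
    dict mapping folder name to its sorted list of object keys.
    """
    matched, unmatched = {}, {}
    for folder, files in group_by_folder(keys).items():
        target = matched if folder in process_titles else unmatched
        target[folder] = sorted(files)
    return matched, unmatched


if __name__ == "__main__":
    # Hypothetical identifiers, used only to show the shape of the data.
    uploads = [
        "b12345678/b12345678.mxf",
        "b12345678/b12345678.mp4",
        "b12345678/b12345678.jpg",
        "b99999999/b99999999.mpg",
    ]
    matched, unmatched = match_uploads(uploads, {"b12345678"})
    print("send to Goobi:", matched)
    print("no matching process:", unmatched)
```

Keeping the unmatched folders in a separate result makes it easy to report uploads whose folder name does not correspond to any process title, which is the most likely user error at this step.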

Purpose, Context and Content

This is a cloud-based, semi-automated pre-ingest workflow for .mxf videos and an ingest workflow for both .mxf and .mpg videos. The previous version of the video workflow handled .mpg videos only and ran entirely on on-premises servers.

The pre-ingest workflow was created to handle Wellcome's new film/video digitisation to JPEG2000/MXF format. The ingest workflow was created to handle the new .mxf format as well as a backlog of .mpgs.

For more information about why and how we built this workflow, please see our Wellcome Collection Stacks blog post. The post was written in December 2020. As of February 2021, both the pre-ingest and ingest workflows are in production.

Evaluation/Review

The workflows are in production and are meeting our current requirements for preservation and access. The process will be reviewed and adjusted as required in the future.

Further Information

- Policies and plans

- Digitisation Strategy [PDF]

- Library catalogue

- Digital Engagement blog

- Digital Preservation at Wellcome

- Building Wellcome Collection’s new archival storage service

- How we store multiple versions of BagIt bags

- Large things living in cold places - How we use Glacier Deep Store

- A sprinkling of Azure - How we backed up AWS to Azure

- Our approach to digital verification

- Developer information

- GitHub site

- Public roadmap

ARay (talk) 13:56, 28 April 2021 (UTC)