Difference between revisions of "Workflow:Workflow for ingesting digitized books into a digital archive"

| Line 16: | Line 16: | ||

* [https://en.wikipedia.org/wiki/Clam_AntiVirus Clam AV] | * [https://en.wikipedia.org/wiki/Clam_AntiVirus Clam AV] | ||

* [[Fedora_Commons]] | * [[Fedora_Commons]] | ||

| − | |organisation=[http://www | + | |organisation=[http://www.unibe.ch/university/services/university_library/ub/index_eng.html Universitätsbibliothek Bern] |

}} | }} | ||

| Line 24: | Line 24: | ||

</div> | </div> | ||

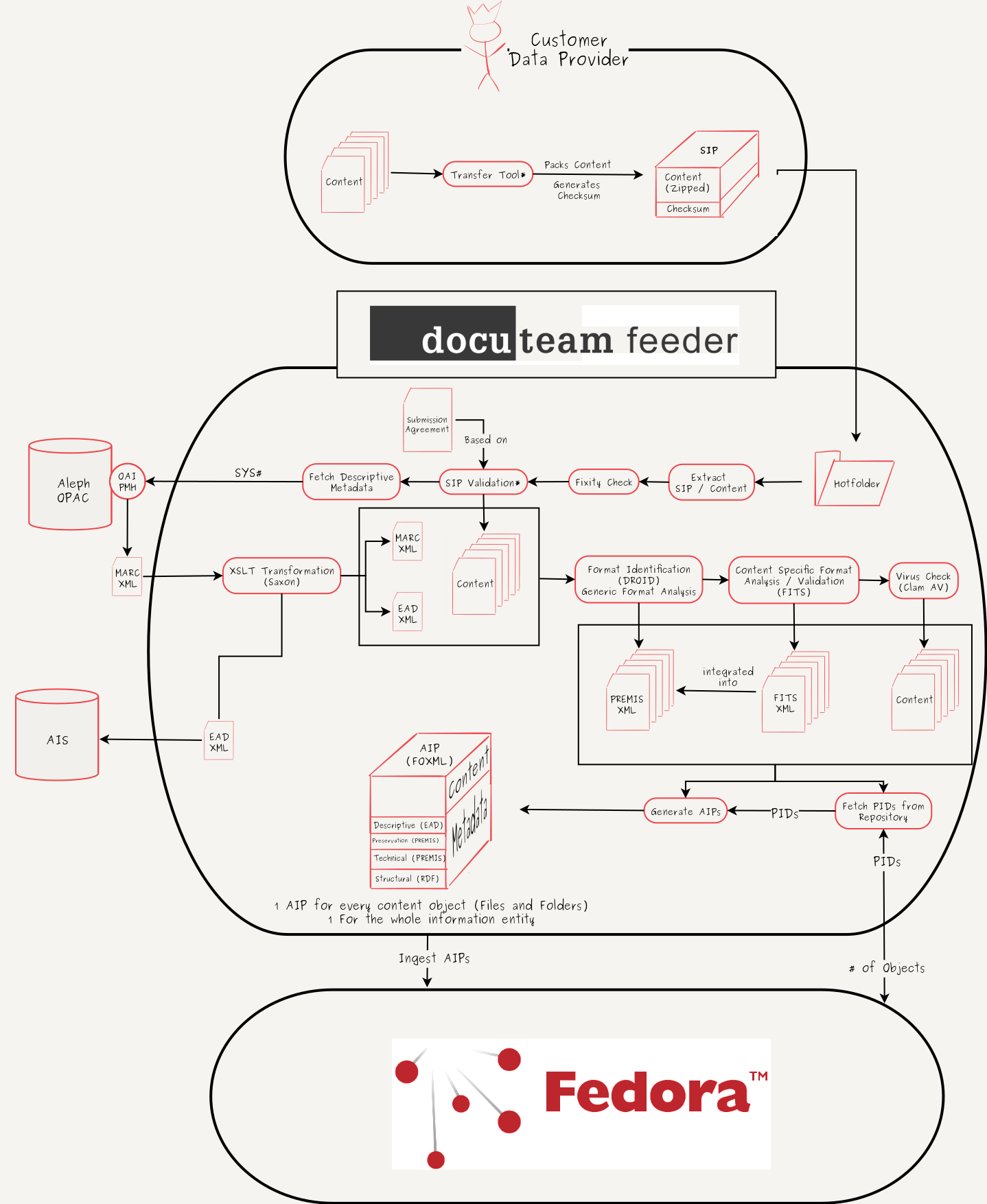

| − | # The data provider provides his content as an input for the transfer tool (currently in development) | + | # The data provider provides his content as an input for the transfer tool (currently in development). |

| − | # The transfer tool creates a zip-container with the content and calculates a checksum of the container | + | # The transfer tool creates a zip-container with the content and calculates a checksum of the container. |

| − | # The zip-container and the checksum are bundled (another zip-container or a plain folder) and | + | # The zip-container and the checksum are bundled (another zip-container or a plain folder) and form the SIP toghether. |

| − | # The transfer tool moves the SIP to a registered, data provider specific hotfolder, which is connected to the ingest server | + | # The transfer tool moves the SIP to a registered, data provider specific hotfolder, which is connected to the ingest server. |

| − | # As soon as the complete SIP has been transfered to the ingest server, a trigger is raised and the ingest workflow starts | + | # As soon as the complete SIP has been transfered to the ingest server, a trigger is raised and the ingest workflow starts. |

| − | # The SIP gets unpacked | + | # The SIP gets unpacked. |

| − | # The | + | # The zip container, that contains the content, is validated according to the provided checksum. If this fixity check fails, the data provider is asked to reingest his data. |

| − | # The content and the structure of the content are validated against the submission agreement, that was signed with the data provider (this step is currently in development) | + | # The content and the structure of the content are validated against the submission agreement, that was signed with the data provider (this step is currently in development). |

| − | # Based on | + | # Based on an unique id (encoded in the content filename) descriptive metadata is fetched from the library's OPAC over an OAI-PMH interface. |

# The OPAC returns a MARC.XML-file. | # The OPAC returns a MARC.XML-file. | ||

| − | # The MARC.XML-file is mapped | + | # The MARC.XML-file is mapped to an EAD.XML-file by a xslt-transformation. |

# The EAD.XML is exported to a designated folder for pickup by the archival information system | # The EAD.XML is exported to a designated folder for pickup by the archival information system | ||

| − | # Every content file is analysed by DROID for format identification and basic technical metadata is extracted (e.g. filesize) | + | # Every content file is analysed by DROID for format identification and basic technical metadata is extracted (e.g. filesize). |

| − | # The output of this analysis is | + | # The output of this analysis is written to a PREMIS.XML-file (one PREMIS.XML per content object). |

# Every content file is validated and analysed by FITS and content specific technical metadata is extracted. | # Every content file is validated and analysed by FITS and content specific technical metadata is extracted. | ||

# The output of this analysis (FITS.XML) is integrated into the existing PREMIS.XML-files. | # The output of this analysis (FITS.XML) is integrated into the existing PREMIS.XML-files. | ||

# Each content file is scanned for viruses and malware by Clam AV. | # Each content file is scanned for viruses and malware by Clam AV. | ||

| − | # For each content object and for the whole information entity (the book) a PID is fetched from the repository | + | # For each content object and for the whole information entity (the book) a PID is fetched from the repository. |

# For each content object and for the whole information entity an AIP is generated. This process includes the generation of RDF-tipples, that contain the relationships between the objects. | # For each content object and for the whole information entity an AIP is generated. This process includes the generation of RDF-tipples, that contain the relationships between the objects. | ||

| − | # The AIPs are ingested into the repository | + | # The AIPs are ingested into the repository. |

| − | # The data producer get's informed, that the ingest finished successfully | + | # The data producer get's informed, that the ingest finished successfully. |

| Line 61: | Line 61: | ||

==Organisation== | ==Organisation== | ||

<!-- Add the name of your organisation here --> | <!-- Add the name of your organisation here --> | ||

| − | [http://www | + | [http://www.unibe.ch/university/services/university_library/ub/index_eng.html Universitätsbibliothek Bern] |

==Purpose, Context and Content== | ==Purpose, Context and Content== | ||

Revision as of 14:30, 7 April 2017


Workflow Description

- The data provider supplies the content as input to the transfer tool (currently in development).

- The transfer tool creates a zip-container with the content and calculates a checksum of the container.

- The zip-container and the checksum are bundled (in another zip-container or a plain folder) and together form the SIP.

- The transfer tool moves the SIP to a registered, data-provider-specific hotfolder that is connected to the ingest server.

- As soon as the complete SIP has been transferred to the ingest server, a trigger is raised and the ingest workflow starts.

- The SIP gets unpacked.

- The zip container that holds the content is validated against the provided checksum. If this fixity check fails, the data provider is asked to resubmit the data.

- The content and its structure are validated against the submission agreement that was signed with the data provider (this step is currently in development).

- Based on a unique ID (encoded in the content filename), descriptive metadata is fetched from the library's OPAC over an OAI-PMH interface.

- The OPAC returns a MARC.XML-file.

- The MARC.XML-file is mapped to an EAD.XML-file by an XSLT transformation.

- The EAD.XML is exported to a designated folder for pickup by the archival information system.

- Every content file is analysed by DROID for format identification, and basic technical metadata is extracted (e.g. file size).

- The output of this analysis is written to a PREMIS.XML-file (one PREMIS.XML per content object).

- Every content file is validated and analysed by FITS, and content-specific technical metadata is extracted.

- The output of this analysis (FITS.XML) is integrated into the existing PREMIS.XML-files.

- Each content file is scanned for viruses and malware by Clam AV.

- For each content object and for the whole information entity (the book) a PID is fetched from the repository.

- For each content object and for the whole information entity an AIP is generated. This process includes the generation of RDF triples that describe the relationships between the objects.

- The AIPs are ingested into the repository.

- The data producer is informed that the ingest finished successfully.
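The packaging and fixity steps above (zip the content, checksum the container, bundle both as the SIP, then re-check on the ingest server) can be sketched in a few lines of Python. This is only an illustration, not the actual transfer tool: the workflow does not name a checksum algorithm, so SHA-256 is an assumption here, and the file names `content.zip` and `content.zip.sha256` are hypothetical.

```python
import hashlib
import tempfile
import zipfile
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Return the hex SHA-256 digest of a file, read in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_sip(content_dir: Path, sip_dir: Path) -> Path:
    """Zip the content and store the container's checksum next to it.

    The SIP is modelled as a plain folder holding both files
    (file names are hypothetical, SHA-256 is an assumption).
    """
    sip_dir.mkdir(parents=True, exist_ok=True)
    container = sip_dir / "content.zip"
    with zipfile.ZipFile(container, "w", zipfile.ZIP_DEFLATED) as archive:
        for item in sorted(content_dir.rglob("*")):
            if item.is_file():
                archive.write(item, item.relative_to(content_dir))
    (sip_dir / "content.zip.sha256").write_text(sha256_of(container))
    return sip_dir


def fixity_ok(sip_dir: Path) -> bool:
    """Recompute the container checksum and compare it with the stored one."""
    container = sip_dir / "content.zip"
    expected = (sip_dir / "content.zip.sha256").read_text().strip()
    return sha256_of(container) == expected


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        content = Path(tmp) / "book"
        content.mkdir()
        (content / "page_0001.tif").write_bytes(b"placeholder image data")
        sip = build_sip(content, Path(tmp) / "sip")
        print("fixity check passed:", fixity_ok(sip))
```

If `fixity_ok` returns `False` on the ingest server, the workflow would stop and ask the data provider to resubmit, as described in the list above.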

List of Tools

- 7-Zip - Pack and unpack content / SIPs

- docuteam feeder - Workflow and ingest framework

- cURL - Fetch MARC.XML via HTTP

- Saxon - MARC.XML to EAD.XML mapping

- DROID - Format Identification and generation of basic technical metadata

- FITS (File Information Tool Set) - Generation of specific, content-aware technical metadata

- Clam AV - Virus check

- Fedora Commons - Digital Repository
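To illustrate the metadata-fetching step that cURL handles in this tool chain, the following Python sketch derives a record ID from a content filename and builds an OAI-PMH `GetRecord` request URL. `GetRecord`, `identifier` and `metadataPrefix` are standard OAI-PMH request parameters; everything else is an assumption made for the example: the base URL, the filename pattern, the `oai:...` identifier scheme and the `marc21` metadata prefix all depend on the actual OPAC and on the naming convention agreed with the data provider.

```python
import re
from urllib.parse import urlencode

# Hypothetical endpoint and naming scheme, for illustration only.
OAI_BASE_URL = "https://opac.example.org/oai"
FILENAME_PATTERN = re.compile(r"^(?P<record_id>\d+)_\d{4}\.tif$")


def record_id_from_filename(filename: str) -> str:
    """Extract the record ID encoded at the start of a content filename."""
    match = FILENAME_PATTERN.match(filename)
    if match is None:
        raise ValueError(f"filename does not match the assumed pattern: {filename}")
    return match.group("record_id")


def get_record_url(record_id: str) -> str:
    """Build an OAI-PMH GetRecord URL that asks for a MARC record."""
    query = urlencode(
        {
            "verb": "GetRecord",
            # Identifier scheme is an assumption; real repositories define their own.
            "identifier": f"oai:opac.example.org:{record_id}",
            # Metadata prefix is an assumption; check the ListMetadataFormats response.
            "metadataPrefix": "marc21",
        }
    )
    return f"{OAI_BASE_URL}?{query}"


if __name__ == "__main__":
    rid = record_id_from_filename("000123456_0001.tif")
    print(get_record_url(rid))
```

The resulting URL could then be passed to cURL (or any HTTP client), and the returned MARC.XML handed to Saxon for the XSLT mapping to EAD.XML.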

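Organisation

Universitätsbibliothek Bern (http://www.unibe.ch/university/services/university_library/ub/index_eng.html)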

Purpose, Context and Content

The University Library of Bern digitizes historical books, maps and journals that are part of the "Bernensia" collection (media about, or by authors from, the state and city of Bern). The digital objects resulting from this digitization process are unique and therefore of great value to the library. To preserve them for the long term, these objects have to be ingested into the digital archive of the library. The main goals of this workflow are to provide an easy interface for the data provider (the digitization team), to provide a simple gateway to the digital archive, and to collect as much of the available metadata as possible in an automated way.

Evaluation/Review

A subset of the workflow has been set up and tested as a proof of concept on a prototype system. It will be installed and extended in a production environment shortly.

Further Information

ChrisReinhart (talk) 14:00, 7 April 2017 (UTC)